Your Documentation Is Probably Teaching AI the Wrong Thing

What Suraj Desai’s talk about AI-powered retrieval quietly revealed about the future of technical writing

Tech writers have spent decades obsessing over whether humans can find information.

Can customers search for it?

Can support agents navigate it?

Can the PDF open without Adobe Reader having an emotional collapse?

Meanwhile, AI showed up, kicked the office door open like a kangaroo hopped up on espresso, and changed the entire game.

Now the question isn’t whether humans can find the information we create. It’s whether machines can interpret it correctly before confidently lying to everyone.

That’s the hidden message buried inside Suraj Desai’s presentation on AI-powered retrieval systems.

At first glance, the talk looked deeply technical. Retrieval pipelines. Vector databases. Agentic loops. BM25 ranking. Taxonomy structures. Enough acronyms to make a tech writer briefly consider a career in artisanal candle-making.

But underneath all that infrastructure talk was something much more important:

Technical documentation is no longer just content. It’s training material for reasoning systems.

And if our docs are messy, ambiguous, contradictory, poorly structured, or missing context, AI systems don’t simply “struggle” with it. They hallucinate with confidence.

The LLM Likely Isn’t The Problem

One of the most important things Suraj said during the webinar was this:

“The LLM didn’t fail. The retrieval did.”

That sentence should probably be tattooed somewhere inside every enterprise knowledge management initiative currently stapling a chatbot onto ungoverned documentation and hoping for the best.

Because most AI failures in documentation systems are not actually language failures.

They’re retrieval failures.

The AI isn’t inventing nonsense because it woke up feeling creative. It’s inventing nonsense because the retrieval system handed it incomplete, conflicting, or irrelevant information.

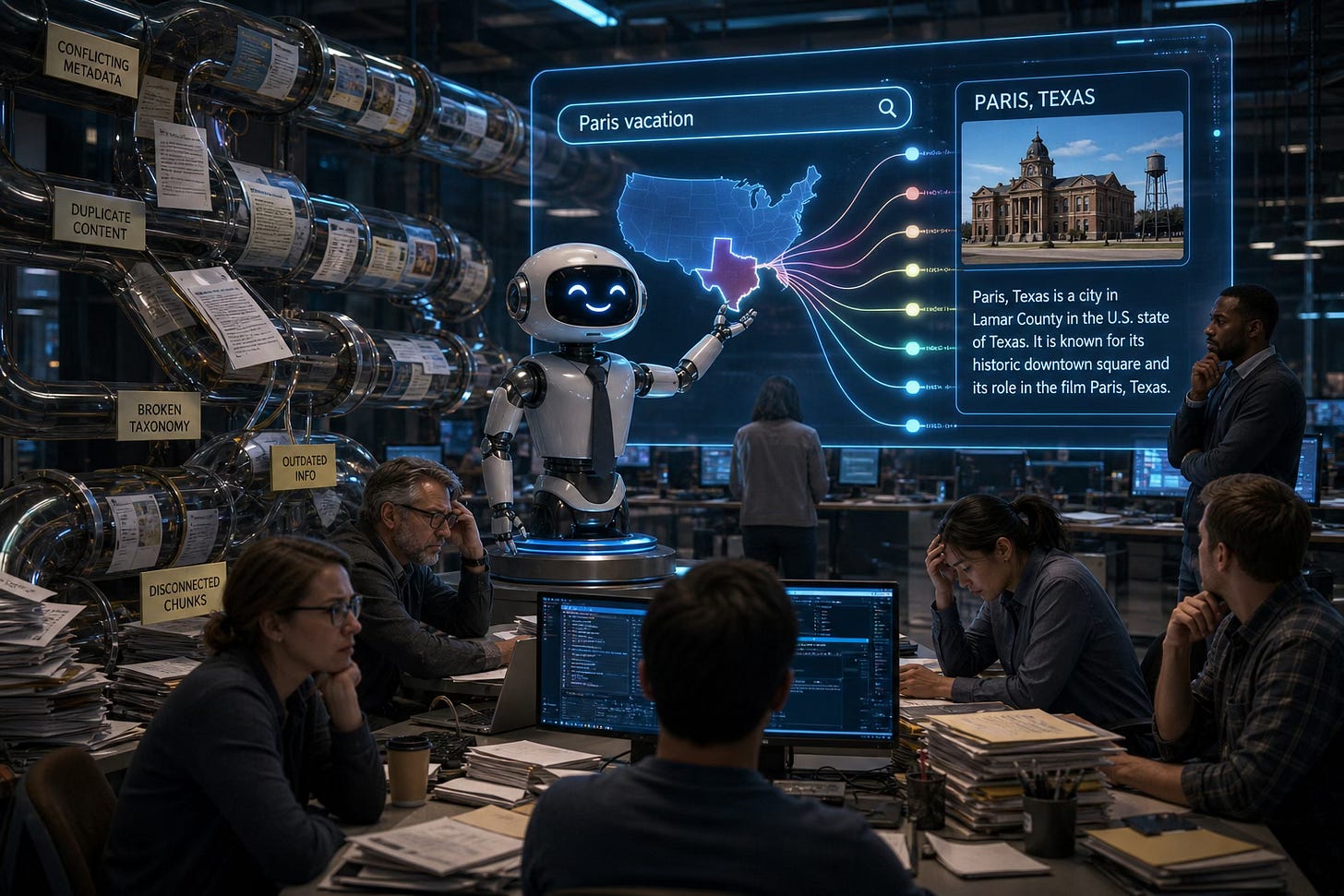

In using traditional docs, humans compensate for weak structure surprisingly well. We:

Infer meaning

Notice context clues

Realize “Paris” probably means France if the conversation is about European vacations

Machines don’t do that naturally. Which means every ambiguity tech writers leave in the docs becomes a possible retrieval failure later. And retrieval failures scale beautifully. That’s the terrifying part.