When Confluence Starts Groaning: A Practical Checklist For Knowing It’s Time To Upgrade Your Internal Docs Stack

At some point, your startup wiki stops being helpful and starts being a liability — the tricky part is noticing before it quietly wrecks everything

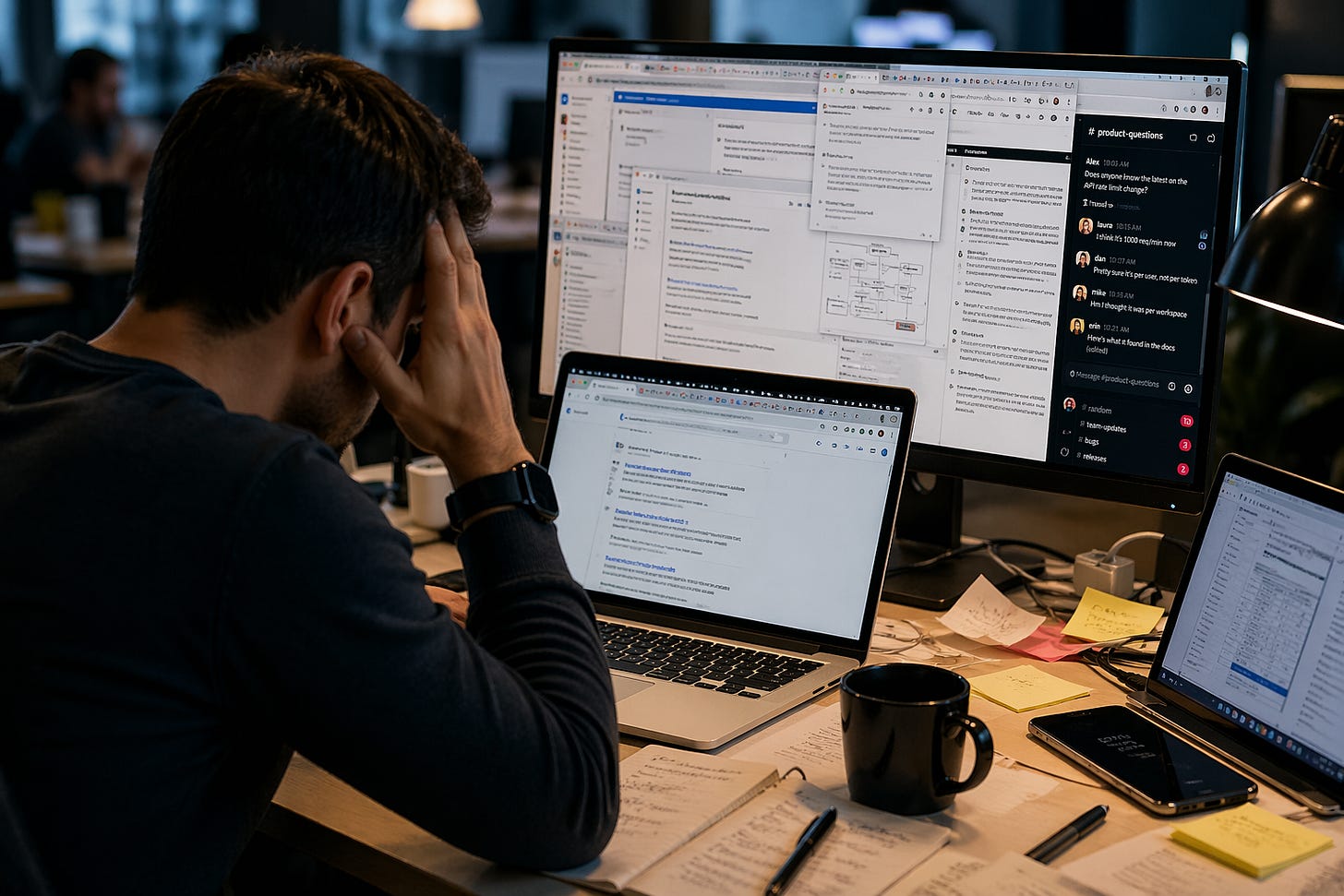

You go 👀 looking for a simple answer. Not a philosophical one. Not a “let’s align on strategy” answer. Just a plain, factual, “how does this feature work?” kind of answer.

You search your internal docs in Atlassian Confluence and get six results. Three are outdated. One contradicts another. One links to a Slack thread from 2022 where someone named “Dan (Contractor)” says, “I think this is how it works?” The last one looks promising (until you realize it describes a feature your company quietly killed last quarter).

So you do what everyone does. You ask for help in Slack.

Five people respond. Two disagree. One says, “Let me check.” Another drops a link… to one of the six pages you already read — the same one you linked to in your Slack query.

And just like that, your documentation system has completed its transformation from “source of truth” to “suggestion box.”

This didn’t happen overnight. It never does.

Why Confluence Worked (Until It Didn’t)

Let’s be fair. Confluence didn’t betray us. It did exactly what we needed at the time.

It’s fast and somewhat flexible. It lets pretty much anyone write nearly anything, almost anywhere, at any time. In the early days, that felt like progress. We were moving quickly, building features, hiring people, figuring things out as we went. Structure felt like a luxury we couldn’t afford back then.

And for a while, that was true.

When our team was small and our content footprint was manageable, we didn’t need governance (or so we thought); we needed speed. We wanted a place to put things so they wouldn’t disappear into someone’s brain forever.

Confluence was great at that. Right up until it wasn’t.

The Moment Things Start to Slip

The shift here is subtle. We didn’t wake up one morning and declare, “Our documentation system has failed us.” Instead, we started noticing small things.

People stopped trusting the docs. They began double-checking everything. They asked questions they shouldn’t have had to ask, and when they figured out what they needed to know, they often created new pages in the wiki instead of updating old ones because it was faster than figuring out what was already there.

Our content was growing, but its usefulness wasn’t.

That’s the moment our “flexible” system became an unmanageable one.

The Signs You’ve Outgrown Your Setup

What should we do, you ask? Well, first, we don’t need a formal audit to know something’s off. We just need to pay attention to the patterns that reveal the obstacles our internal documentation hairball introduces.

Do we know if there’s a problem?

We can often easily see the signs of internal documentation problems. Docs contradict themselves. There’s no clear source of truth, just a collection of “maybe, probably correct” answers. Pages linger long after they’ve expired, like leftovers no one wants to claim. Subject matter experts rewrite entire sections instead of updating them, because it’s easier than untangling what came before.

Search becomes a scavenger hunt. Results are plentiful but not helpful. Navigation reflects how our org chart is structured, not how people actually think. Users bypass documentation entirely and head straight to Slack, where answers are fresher — even if they’re not better.

Scaling becomes awkward. The same information appears in multiple places because our team has never made reuse systematic; it’s a wish, at best. Localization feels like a fantasy. Publishing content to different channels involves copying, pasting, and a quiet prayer that nothing breaks.

Governance, if it exists at all, is mostly implied. Anyone can publish anything. Review cycles are optional. Versioning is… optimistic.

And then there’s the AI problem.

We point a generative AI tool at our documentation, expecting magic. 🪄 What we get instead is a confident stream of half-truths, contradictions, and occasionally inspired fiction. The AI isn’t broken. It’s doing exactly what it’s supposed to do — summarizing messy and ungoverned input into equally messy output.

Turns out, chaos in equals chaos out.

What It’s Actually Costing You

Here’s the part no one likes to say out loud: we’re already paying for this problem.

We’re paying in time: every minute someone spends searching, second-guessing, or rewriting content that already exists somewhere. We’re also paying in expensive onboarding delays, as new hires struggle to separate fact from folklore.

And we’re paying in support costs, because people can’t find answers that should be obvious. We’re also paying in product friction, when users don’t understand what we’ve built well enough to use it or explain it to others.

And if we’re exploring AI (who isn’t?), we’re paying there, too: unreliable content doesn’t magically become reliable when we run it through a language model. It just becomes faster at being wrong.

So What Are “Professional Tools,” Really?

This is where people get nervous, because it starts to sound like a tooling conversation. And tooling conversations have a reputation for turning into expensive shopping trips.

But this isn’t about buying something shiny. It’s about capabilities.

At a certain scale, we need content that’s structured, not just written. Modular, not monolithic. Reusable, not endlessly duplicated.

We need workflows that ensure our content is reviewed, approved, and maintained, not just created and forgotten.

We need metadata and taxonomy so our information can be found, filtered, and delivered in ways that make sense to our users.

We need the ability to publish the same content across multiple channels without copying and pasting it into oblivion. And increasingly, we need content that machines can understand (not just humans) so AI systems can retrieve accurate, grounded answers instead of improvising and getting it wrong.
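To make "structured, not just written" a little more concrete, here is a minimal sketch of what page-level metadata might look like once every page has an owner, a review date, and taxonomy tags. Everything in it is an assumption for illustration: the `DocPage` record, its field names, the 180-day review window, and the sample pages are hypothetical, not the schema of Confluence or any particular platform.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class DocPage:
    """Hypothetical page record; field names are illustrative only."""
    title: str
    owner: str
    last_reviewed: date
    tags: set[str] = field(default_factory=set)


def stale_pages(pages, today, max_age_days=180):
    """Return pages whose last review is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [p for p in pages if p.last_reviewed < cutoff]


def pages_with_tag(pages, tag):
    """Return pages that carry the given taxonomy tag."""
    return [p for p in pages if tag in p.tags]


# Made-up sample data, purely for demonstration.
pages = [
    DocPage("Billing overview", "alice", date(2024, 1, 10), {"billing"}),
    DocPage("Billing API retries", "bob", date(2024, 11, 2), {"billing", "api"}),
    DocPage("Onboarding checklist", "carol", date(2023, 6, 1), {"onboarding"}),
]

today = date(2025, 1, 1)
stale_titles = [p.title for p in stale_pages(pages, today)]
billing_titles = [p.title for p in pages_with_tag(pages, "billing")]

print("Needs review:", stale_titles)
print("Tagged billing:", billing_titles)
```

The point is not the code itself. Once ownership and review dates exist as data, "which pages have expired?" becomes a query instead of a guess.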

There are different ways to get there. Component content management systems. Docs-as-code environments. Knowledge delivery platforms.

The specifics matter less than the outcome.

We’re moving from “a place where we store information” to “a system that manages, governs, and delivers knowledge.”

Making The Business Case Without Sounding Like We Just Want New Toys

If we walk into a meeting and say, “Our team needs a new documentation platform,” we’ll get exactly the reaction we deserve. 🫤 Blank stares. Budget concerns. Someone asking if this can wait until next year (or the year after).

So don’t pitch a tool.

Start with evidence. Show the broken pages. The conflicting information. The Slack threads that exist because the docs failed.

Then translate that into cost. Not abstract cost — real cost 💰. Time lost. Support tickets created. Delays introduced.
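A back-of-the-envelope version of that translation might look like the sketch below. Every number in it is a placeholder assumption (headcount, minutes lost per day, loaded hourly rate, working weeks per year), so swap in figures you can defend from your own evidence.

```python
def weekly_search_cost(people, minutes_per_day, hourly_rate, workdays=5):
    """Estimate the weekly cost of time lost to hunting for answers."""
    hours_lost = people * minutes_per_day * workdays / 60
    return hours_lost * hourly_rate


# Hypothetical inputs: 40 people each losing 15 minutes a day,
# at an assumed loaded rate of 60.00 per hour.
weekly = weekly_search_cost(people=40, minutes_per_day=15, hourly_rate=60.0)

# Assumes 48 working weeks per year.
annual = weekly * 48

print(f"Weekly: {weekly:,.0f}  Annual: {annual:,.0f}")
# prints "Weekly: 3,000  Annual: 144,000"
```

Even a rough estimate like this gives the room something to argue with, which is more useful than "the docs are bad."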

Tie it to business goals like faster onboarding, improved customer experience, more reliable AI initiatives, or reduced support burden.

And for the love of all things sensible, don’t propose a massive migration as the opening move. Start small by piloting something. Make the problem clearly visible to those who should care, then prove that a better approach produces better outcomes before trying to sell the full solution.

A Quick Reality Check About Migration

Let’s not pretend this part is fun. Migration means cleanup and confronting the fact that some of our content shouldn’t make the journey. It means defining governance (where none existed before) and asking people to change how they work.

The reality here: There will likely be resistance. There’ll be moments when you question your life choices. But there’s also an upside.

Migration is the one time we’re allowed — expected, even — to fix what’s broken instead of working around it. And we don’t have to fix everything at once. In fact, we shouldn’t.

One Question to Ask Yourself Before You Do Anything Else

If our entire team disappeared tomorrow, would someone else be able to rely on our documentation to run the business? Not “eventually figure it out.” Not “after asking a few questions.” Actually rely on it.

If the honest answer involves Slack, guesswork, or crossing your fingers, well … there’s work to do.

The Quiet Shift No One Talks About

At some point, documentation stops being a side effect of building a product and becomes part of the product itself (whether anyone recognizes that or not). When that happens, the tools we use (and the way we manage content) stop being a convenience decision. They become a business decision.

And that’s usually right around the time Confluence starts to groan.

Not because it’s broken — but because we’ve asked it to do a job it was never designed to handle at scale.

We don’t notice the shift right away. We just feel the friction and watch as the extra minutes spent searching tick by. We also feel the second-guessing and the quiet erosion of trust, until one day, the system we relied on to keep us aligned is the thing slowing us down.

At that point, the question isn’t whether we need to change. It’s how much longer we’re willing to pay for not changing. 🤠

Scott, the symptoms you describe are familiar to anyone who has worked through a wiki maturity cycle: contradictory pages, stale search results, and the drift of authoritative content into a Slack archaeology problem. These are real and worth taking seriously.

The diagnosis is where the argument goes wrong. The piece treats wiki rot as a property of the tool, and the resolution as a category upgrade from "place to store information" to "system that manages, governs, and delivers knowledge." That framing's been with us since paper records management, and it's been wrong every time. The tool doesn't produce the chaos. The organisation does. Replace Confluence with a component content management system, a docs-as-code pipeline, or any of the knowledge delivery platforms currently being marketed, and within eighteen months, a team without governance practices will produce the same scattered, contradictory, half-maintained corpus. The platform changed. The ownership model, review cadence, taxonomy discipline, and authoring incentives didn't.

This is the consistent finding across forty years of knowledge management and organisational behaviour research, from Nonaka onwards. Documentation problems are organisational problems. Tools amplify whatever authoring culture is already in place.

It's also worth resisting the framing that runs throughout the piece, where Confluence "groans" and AI produces "occasionally inspired fiction." Software doesn't betray anyone. People configure, govern, and maintain software, or fail to. The personification flatters everyone except the actual cause.

A second point worth making clearly: Confluence isn't a knowledge management system. It's a collaborative wiki. Calling it a KMS, and judging it as one, sets up a category error the rest of the argument depends on. A KMS, properly understood, implies deliberate practices around capture, classification, lifecycle, retrieval, and transfer of tacit and explicit knowledge. Confluence supports authoring and search. Everything else has to be built around it, in any tool. How an organisation frames its KMS, narrowly as explicit-document management or broadly to include communities of practice, mentoring, and after-action review, changes the tooling requirement entirely. Most teams haven't done that framing work before signing a procurement contract, which is a large part of why the contract eventually disappoints them.

On the SaaS question. For a non-trivial subset of organisations, Confluence and the larger family of subscription documentation platforms are a regrettable spend. Per-seat pricing scales with headcount, customisation is bounded by the vendor's roadmap, data sovereignty is partial at best, and the eventual migration cost is a liability that quietly accrues and rarely appears on the balance sheet. A self-hosted, modestly customised wiki, whether MediaWiki, BookStack, Wiki.js, or Outline, delivers the same authoring, search, role-based access, audit trail, and integration surface, with full control over schema, metadata, and lifecycle, at a fraction of the multi-year cost. The trade is engineering time against subscription dollars. For organisations with even modest internal IT capacity, the math is usually on the self-hosted side. The "professional tools" framing in the piece tends to obscure this rather than examine it.

None of which makes Confluence the wrong choice in every case. For small teams, hosted and low-friction is a reasonable fit. The mistake is assuming that the tool category determines outcomes at scale. It doesn't. What determines outcomes is governance, ownership, and authoring incentives. The organisations whose documentation holds up tend to have those in place first, and the platform second.

Byron