What If The Biggest Issue With AI In Your Organization Isn’t The AI Itself?

Your AI strategy is only as reliable as the information your organization is willing to stand behind

Guest contributor: Jason Kaufman, Zaon Labs

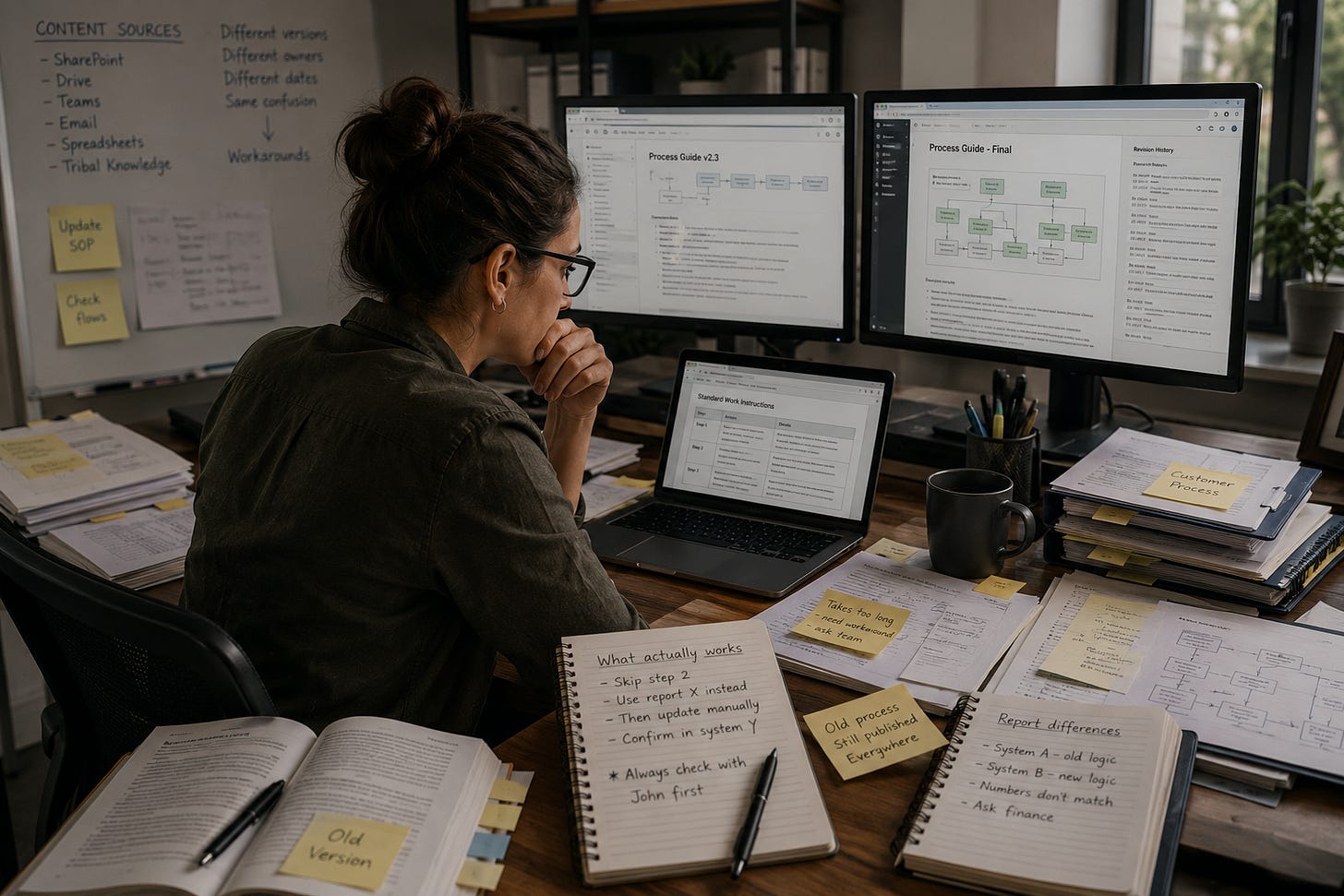

I keep coming back to a moment I’ve seen play out over and over again throughout my career, long before generative AI entered the conversation. Someone opens a document, reads a section, pauses, and then says, almost instinctively, “I’m not sure this is right.”

It is a small moment, easy to overlook, but it carries more weight than most people realize. That hesitation is often the first visible sign that something deeper is off. Once that doubt enters the system, everything built on top of it becomes less reliable.

It is tempting to look at AI when things start to feel inconsistent. The outputs are uneven. Sometimes outdated. Occasionally just wrong. The natural reaction is to focus on the technology. Improve the model. Refine the prompts. Invest in better infrastructure. I had a version of that reaction myself when generative AI first emerged. After spending years building businesses around content and knowledge management, my initial instinct was that this shift might disrupt everything. But the more I worked with these systems, the more it became clear that AI was not introducing a new problem. It was exposing one that had been there all along.

Most organizations have been operating with fragmented knowledge for years. Different teams maintain different versions of the same information. Content gets updated in one system and missed in another. Context evolves, but documentation often stays frozen in time.

Over the past twenty-five years, working with enterprises on knowledge management and content strategy, I have seen this pattern repeat itself in different forms, but with the same underlying issue.

People adapt in practical ways.

They ask colleagues instead of trusting systems. They may keep their own notes. They often build informal sources of truth that feel more dependable than the official ones. These workarounds keep things moving, but they slowly erode confidence in the systems that were meant to support them.

When AI enters that kind of environment, it behaves very differently than a person would. It does not navigate uncertainty. It tries to resolve it. It pulls from multiple sources, reconciles conflicting inputs, and produces an answer with confidence. That is where the issue becomes visible in a way it never was before. The uncertainty that used to sit quietly in the background is now embedded directly in the output. And people notice.

Most organizations respond by trying to improve how information is structured. They invest in better organization, better retrieval, more sophisticated systems. All of that matters. But it rests on an assumption that often goes unchallenged: that the underlying information is correct. In my experience, that assumption rarely holds. The real gap is not structural. It is factual: which statements are accurate, which are outdated, and which are missing context that changes their meaning. Until those questions are addressed, AI will continue to reflect the inconsistencies within the knowledge it consumes.


This is where the idea of Truth Curation begins to take shape. Not as another layer of organization, but as a process for validating what is actually true across an organization.

AI can be used to scan content at scale and surface conflicts, inconsistencies, and gaps. It can highlight where information does not align or where context is incomplete. But resolution still depends on human judgment.

Subject matter experts step in to validate, revise, and align what the system surfaces. They update what is incorrect, clarify what is ambiguous, and remove what is no longer relevant. It becomes a more focused use of expertise, applied where it has the most impact.
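To make the division of labor concrete, here is a minimal sketch of the "surface, then hand to a person" step. It is an illustration under stated assumptions, not a description of any particular product: the `Statement` record, the `surface_for_review` function, the source names, and the one-year staleness threshold are all hypothetical, and simple string comparison stands in for the AI-driven scanning described above. The point is only that the code flags conflicts and stale entries and leaves the resolution to a human.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Statement:
    """One claim as recorded in one system (all field names are hypothetical)."""
    source: str          # e.g. "wiki" or "support_kb"
    topic: str           # what the claim is about
    value: str           # the claim itself
    last_reviewed: date  # when someone last confirmed it


def surface_for_review(statements, today, max_age_days=365):
    """Group statements by topic and flag conflicts and stale entries.

    Returns (topic, reason, statements) tuples for a subject matter expert.
    Nothing is resolved automatically.
    """
    by_topic = defaultdict(list)
    for s in statements:
        by_topic[s.topic].append(s)

    queue = []
    for topic, group in sorted(by_topic.items()):
        # Conflict: the same topic carries more than one distinct value.
        distinct_values = {s.value.strip().lower() for s in group}
        if len(distinct_values) > 1:
            queue.append((topic, "conflict", group))
        # Stale: a statement has not been reviewed within the assumed window.
        stale = [s for s in group if (today - s.last_reviewed).days > max_age_days]
        if stale:
            queue.append((topic, "stale", stale))
    return queue


# Made-up sample data for illustration only.
statements = [
    Statement("wiki", "refund_window", "30 days", date(2021, 3, 1)),
    Statement("support_kb", "refund_window", "14 days", date(2024, 6, 1)),
    Statement("support_kb", "support_hours", "9am-5pm", date(2024, 5, 1)),
]

review_queue = surface_for_review(statements, today=date(2024, 9, 1))
for topic, reason, group in review_queue:
    print(topic, reason, [s.source for s in group])
```

With this sample data, the refund window is flagged twice, once because the two sources disagree and once because the wiki entry is old, while the support hours entry passes untouched. Which refund window is actually correct is a question the code deliberately does not try to answer.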

What follows is not dramatic, but it is meaningful. Employees begin to trust the systems they rely on. They spend less time double-checking information. They rely less on informal workarounds. And AI starts to perform the way it was expected to, not because the model changed, but because the foundation did.

After more than two decades working at the intersection of knowledge management and AI strategy, this is the pattern I keep coming back to. Organizations do not suffer from a lack of information. They suffer from a lack of trusted information.

A useful shift in perspective is this. Instead of asking how to make AI more effective, ask whether the information it relies on is something your organization can confidently stand behind. That is where meaningful progress begins. 🤠

Jason Kaufman is an AI strategist, entrepreneur, and technical communication expert with more than 20 years of experience in knowledge management, enterprise AI integration, and content strategy. He is the CEO and co-founder of Zaon Labs and serves as President of Irrevo, where he helps organizations apply generative AI to content operations, enterprise knowledge management, and workflow automation.

Kaufman is a frequent speaker and educator on AI-enabled content strategy, prompt engineering, and the future of technical communication.