The “Car Wash Error” — Why AI Makes Your Documentation Sound Better (And Be Wrong)

AI can polish your content until it shines — and quietly remove the parts that matter most

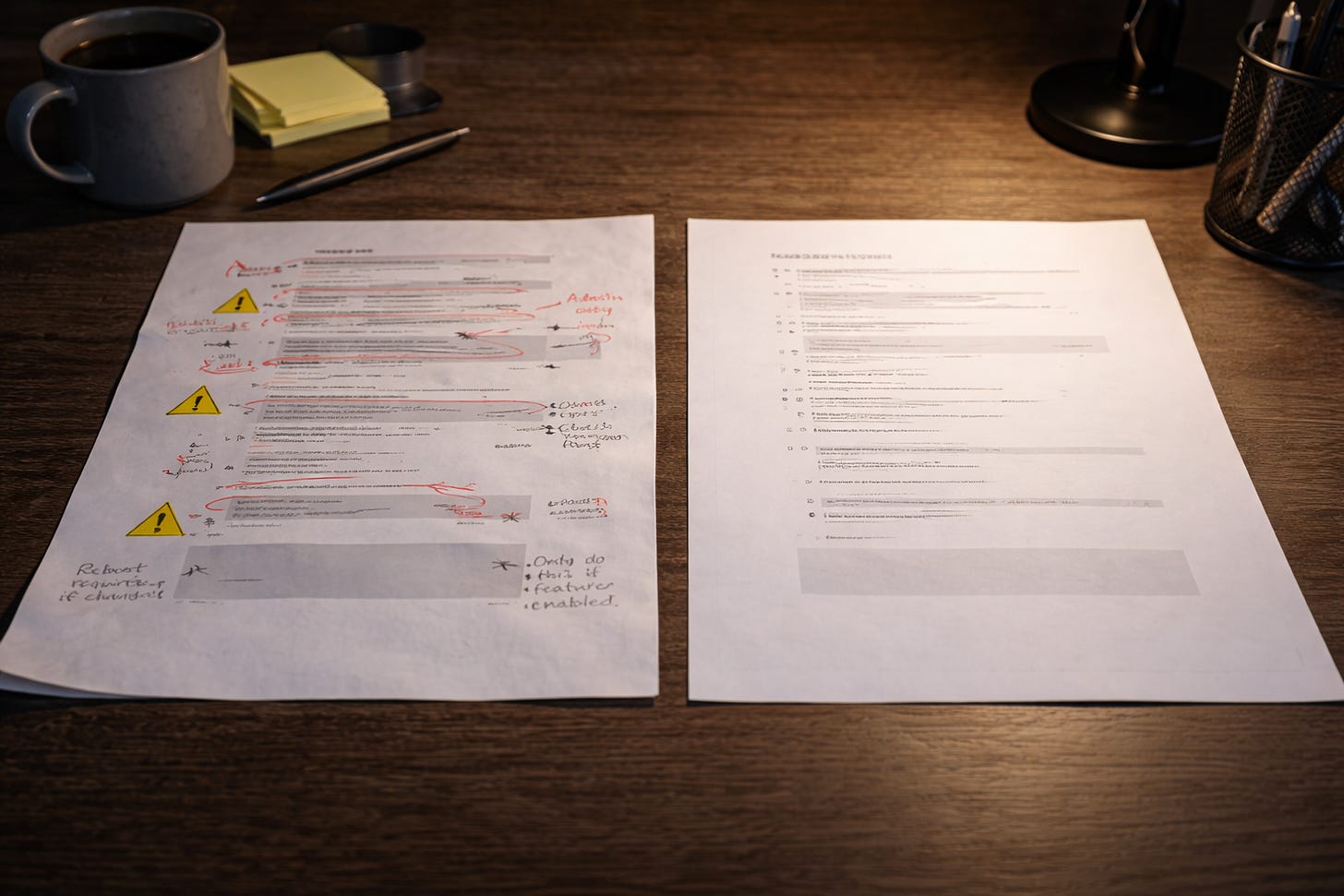

There’s a moment that happens now with unsettling regularity. You paste a chunk of documentation into an AI tool. It comes back cleaner. Tighter. More readable. The sentences behave themselves. The tone sounds like someone who drinks water and meets deadlines.

👉🏾 You think, “Well, that’s better.”

Then you look again.

👉🏾 The warning is gone.

👉🏾 The prerequisite has been politely implied into nonexistence.

👉🏾 The conditional step has been simplified into something that would work beautifully in a world where nothing ever goes wrong.

Nothing looks broken. And yet, everything is just slightly… off.

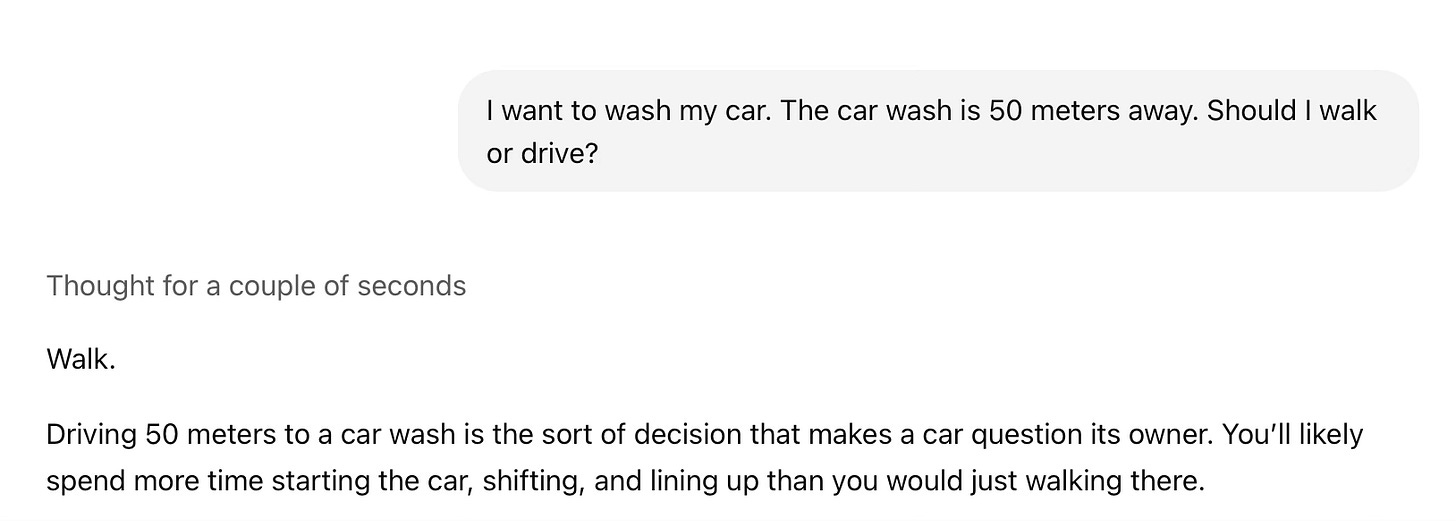

This is what some call the car wash error.

What Is The Car Wash Error?

It’s not a formal term. You won’t find it in industry research papers. It’s a practical observation from people who’ve watched content go into a machine and come out looking cleaner while quietly losing the parts that mattered.

Put simply, the car wash error happens when AI makes content smoother and easier to read, but less useful in real-world situations. The content goes in with dents and bugs 🐞 on the windshield. It comes out polished.

But then, somewhere along the way, a side mirror falls off.