Models, Apps, and Harnesses: How Tech Writers Should Select AI Tools

A practical way to think about AI tools based on how your work actually gets done

Tech writers are living through a super strange moment. We’re supposed to calmly evaluate AI tools while the rest of the software world sprints from headline to headline shouting about “breakthroughs,” “agents,” and “the end of work as we know it.”

Meanwhile, we’re just trying to decide which tool helps us update documentation that aligns with our style guide.

So the reasonable question remains: Which AI should a technical writer use?

That question used to be simple. “Use ChatGPT” was a complete sentence, and one that most people understood. Today, that answer feels like saying “Use the internet.” It’s technically correct and wildly insufficient.

Ethan Mollick, a professor at the Wharton School of the University of Pennsylvania, offers one of the clearest explanations of why this question has become more complicated in his article, A Guide to Which AI to Use in the Agentic Era. He also suggests a way to think about it that won’t fall apart the next time a model gets renamed.

What follows is a summary of his core ideas, hopefully useful to tech writers.

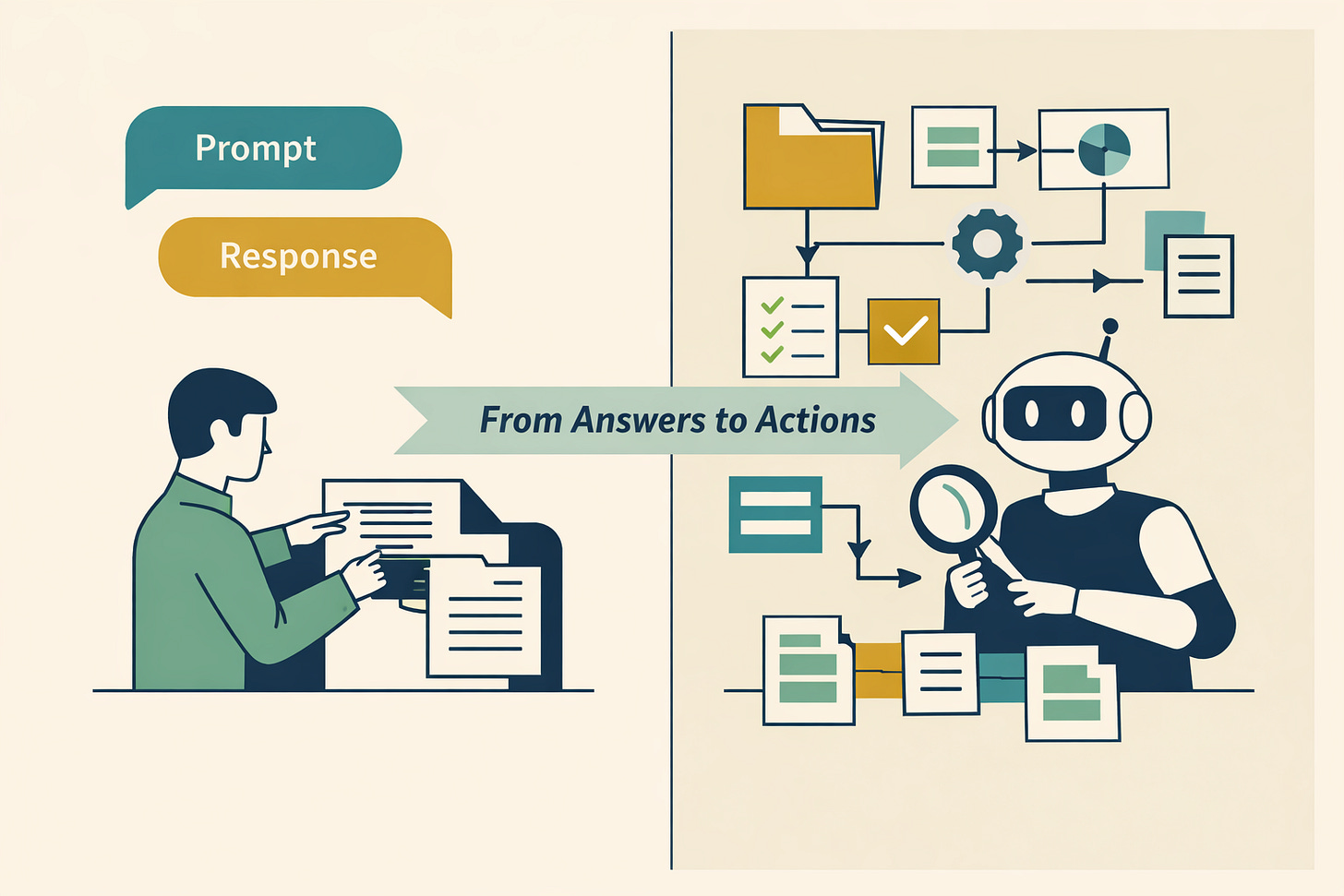

The Big Shift: From Answers to Actions

For a while, AI tools functioned mostly as conversational partners. You typed a prompt, the system generated text, you refined the prompt, and eventually you copied the output into your documentation tool. Then you quietly edited it because you are a professional and the AI is not.

That’s still happening. But it’s no longer the most important development.

What’s changing is that AI systems are increasingly designed not just to generate responses but to take actions. They can retrieve information, examine files, plan multi-step tasks, execute instructions across several stages, and interact with other tools.

Mollick calls this the move into the “agentic era.” Stripped of jargon, it means AI is becoming less like a chatbot and more like an assistant that can operate a toolkit.

For professional tech writers, that distinction matters. Writing documentation has never been about producing paragraphs. It involves gathering scattered information, reconciling contradictions, restructuring messy subject matter expert (SME) input, validating accuracy, applying standards, and ensuring consistency across a content set spanning hundreds or thousands of topics.

The real opportunity isn’t that AI can draft a procedure in seconds. The opportunity is that AI can reduce friction throughout the documentation workflow.

A Practical Way to Think About AI Tools

Mollick offers a simple but powerful lens for understanding the AI landscape. Instead of treating “AI” as a single category, he separates it into three layers: models, apps, and harnesses.

This isn’t an academic framework. It’s a decision-making shortcut. And once you understand the distinction, the “Which AI should I use?” question becomes much more manageable.

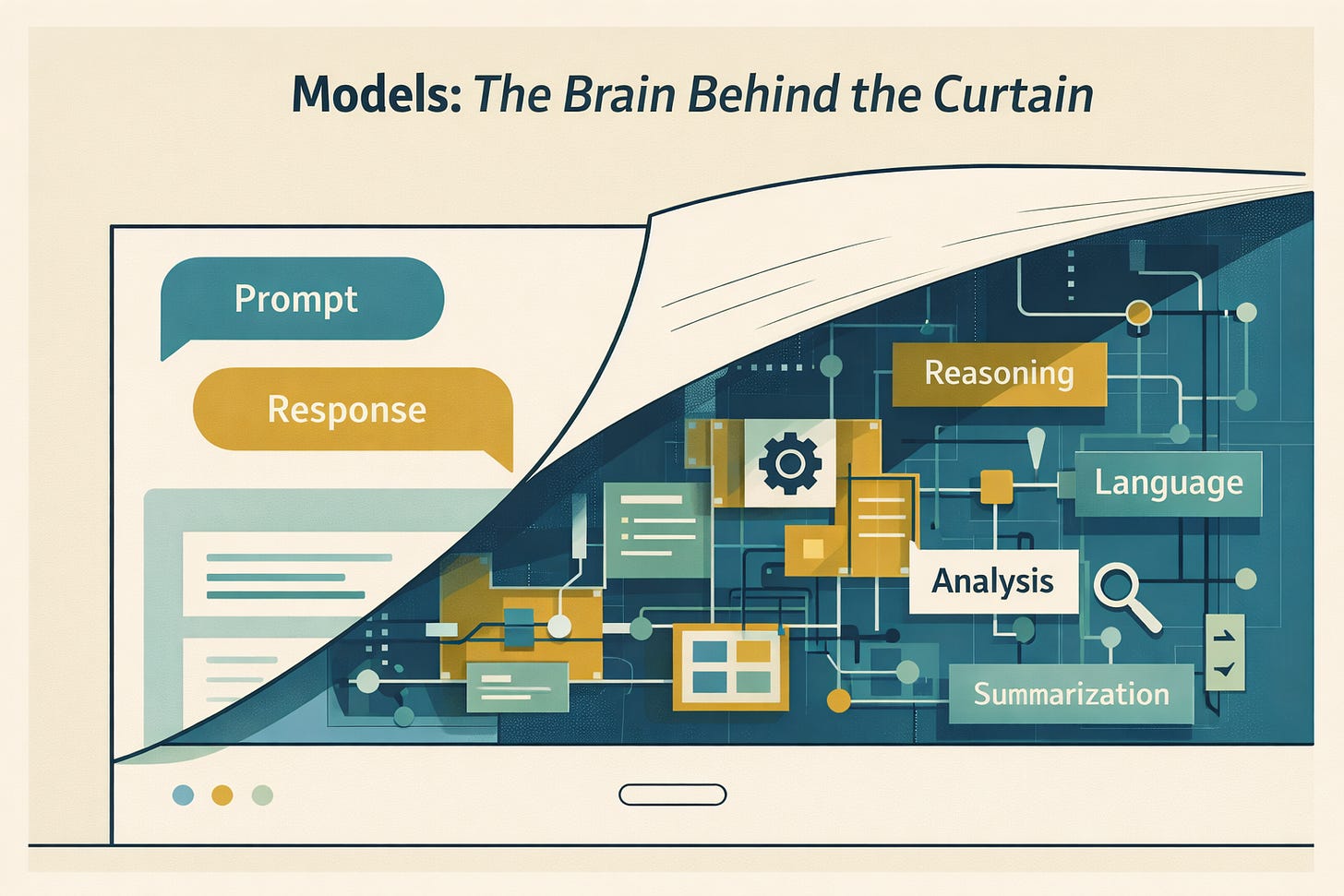

Models Matter Because They Determine Baseline Capability

The model is the underlying engine that performs reasoning, language generation, summarization, and analysis. It’s what people usually mean when they compare ChatGPT, Claude, or Gemini, even though those same names also label the apps built on top of the models.

Models matter because they provide capability.

Some are better at reasoning, while others handle longer contexts more gracefully. Some produce more concise prose than their competition. And, problematically, some hallucinate in ways that are less obvious than others.

As of February 2026 (an important timestamp, because these names will change), the frontier model competition included offerings from OpenAI, Anthropic, and Google. If you are reading this in 2027 or later, assume the specific versions have changed. The structural point is what matters: the leading labs are producing models that are increasingly close in overall capability.

That’s one of Mollick’s more reassuring insights. For most professional tasks, the top-tier models are all very good. The differences exist, but they are narrower than the marketing language suggests.

In other words, most technical writers are not choosing between genius and incompetence. They are choosing between slightly different flavors of extremely capable systems.

That’s a useful perspective.

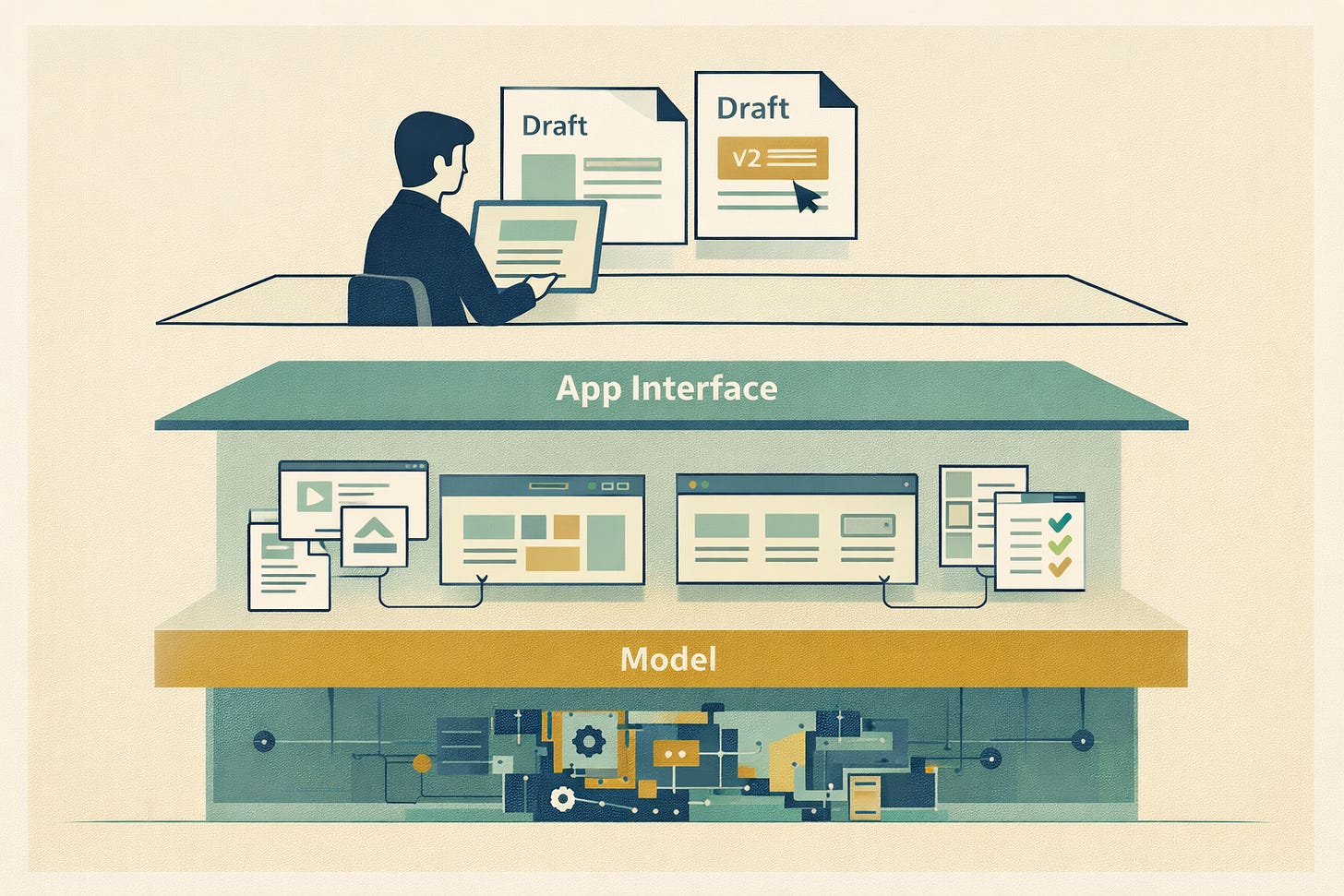

Apps: Where You Actually Do the Work

Here’s where things get more interesting.

You don’t really use a generative AI model directly. You use an application.

ChatGPT, Claude, Gemini, Perplexity, Microsoft Copilot — these are apps. They provide the interface, file upload capability, browsing features, memory behavior, collaboration tools, and subscription tiers that determine what you can — and cannot — access.

Two apps can use comparable models and still feel dramatically different because the experience layer changes everything.

For tech writers, this layer is crucial. Documentation work rarely happens in a single prompt. It involves reviewing drafts, comparing versions, uploading PDFs, checking terminology lists, and iterating across multiple passes. If the app environment makes that awkward, the brilliance of the underlying model won’t compensate.

This is why some writers prefer one tool over another, even when benchmark tests show near parity. The environment shapes how the work flows.

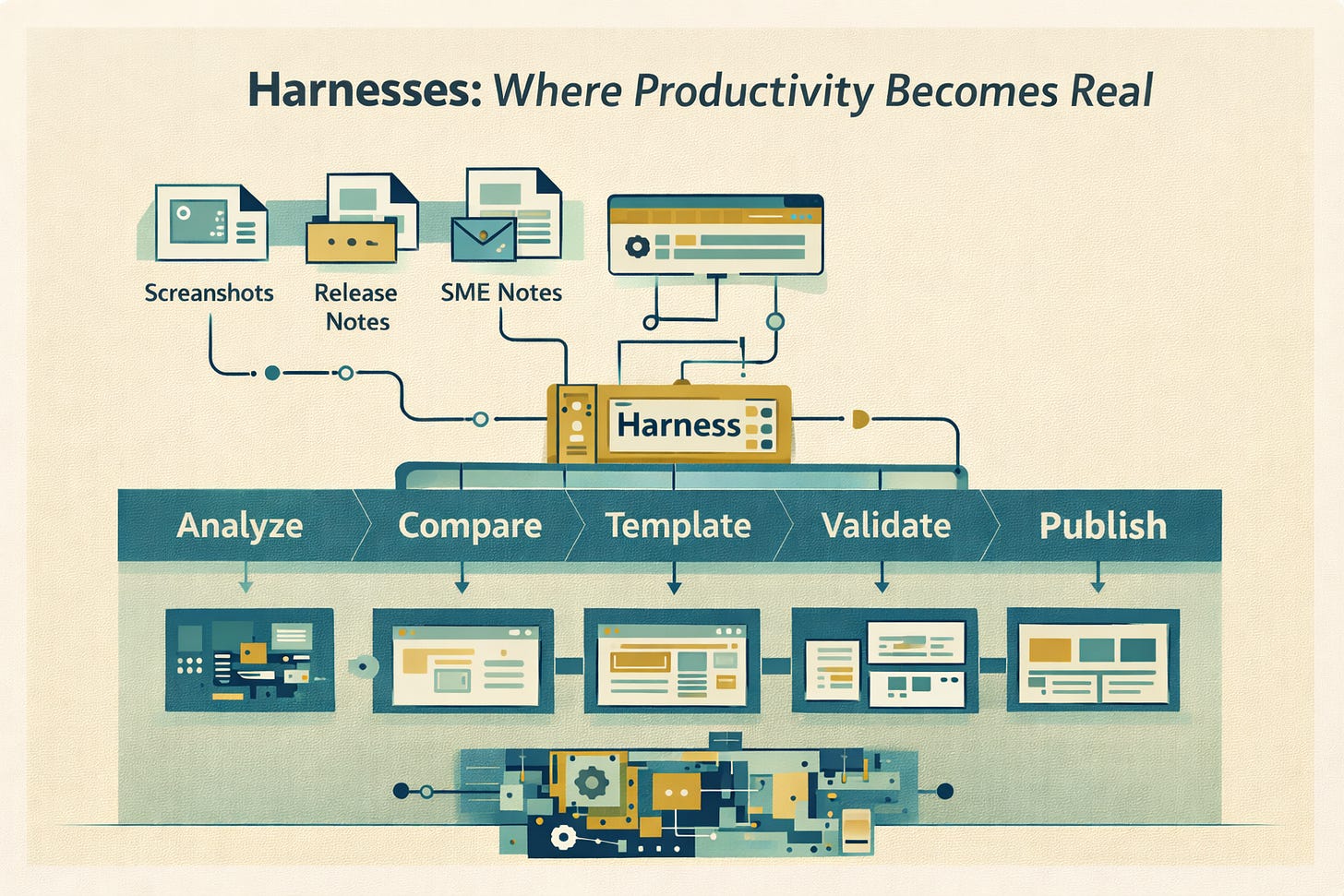

Harnesses: Where Productivity Happens

The most important concept in Mollick’s article, especially for tech doc pros, is the harness.

A harness is the layer that enables AI to do more than produce text.

It allows models to interact with tools, follow workflows, process multiple inputs, and execute multi-step actions. It turns a clever engine into something closer to a digital worker.

And here’s the uncomfortable truth: in many cases, the harness matters more than the model.

The limiting factor in documentation is rarely whether an AI can write a coherent paragraph. The limiting factor is everything around that paragraph — retrieving accurate source material, checking for consistency across documents, applying structured templates, comparing versions, validating compliance with regulations and policies, and ensuring alignment with release notes and interface changes.

If a tool cannot access your source content, cannot analyze multiple files, cannot structure output in reusable formats, and cannot follow a defined workflow, then its intelligence is mostly decorative.

This is where “real productivity” enters the conversation.

What Real Productivity Means for Writers

Productivity is often confused with speed. But speed without accuracy simply accelerates rework.

For technical writers, real productivity means reducing the time spent on mechanical tasks that add little value. It means spending less effort reformatting content, less time rewriting the same explanation for different audiences, and less energy converting meeting notes and support tickets into structured documentation.

It also means reducing the cognitive burden of chasing context. Writers routinely spend hours digging through Slack threads, ticketing systems, and email chains to locate the authoritative description of a feature. A strong harness can help ingest, summarize, and reconcile those sources far more quickly.

Real productivity also shows up in fewer revision cycles. When AI can analyze terminology consistency, identify contradictory statements across a set of docs, or flag missing prerequisites before publication, it shifts effort from reactive cleanup to proactive quality control.
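To make the terminology check concrete, here is a minimal Python sketch. It is not how any particular harness works. It assumes a hand-maintained term list (the `PREFERRED_TERMS` mapping, the `find_term_variants` function, and the sample file names are all hypothetical) and it only flags literal variants, line by line.

```python
import re
from collections import defaultdict

# Hypothetical style-guide entries: preferred term -> variants to flag.
PREFERRED_TERMS = {
    "sign in": ["log in", "login", "log on"],
    "drop-down list": ["dropdown", "drop down menu"],
}


def find_term_variants(docs, preferred_terms=PREFERRED_TERMS):
    """Return {preferred_term: [(doc_name, variant, line_number), ...]}.

    `docs` maps a document name to its full text.
    """
    findings = defaultdict(list)
    for name, text in docs.items():
        for line_no, line in enumerate(text.splitlines(), start=1):
            for preferred, variants in preferred_terms.items():
                for variant in variants:
                    # Whole-word, case-insensitive match on the variant.
                    pattern = rf"\b{re.escape(variant)}\b"
                    if re.search(pattern, line, flags=re.IGNORECASE):
                        findings[preferred].append((name, variant, line_no))
    return dict(findings)


# Illustrative input only; these files do not exist anywhere.
docs = {
    "install.md": "Log in to the portal.\nChoose a value from the dropdown.",
    "admin.md": "Sign in as an administrator.",
}
report = find_term_variants(docs)
```

A report like this tells the writer where to look before publication. The judgment call about whether each hit is actually wrong stays with the human.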

Most importantly, productivity for tech pubs teams is about scalability. The holy grail isn’t writing faster; it’s writing once and reusing correctly. Harness-driven AI can help generate structured variants, enforce style rules across large content sets, and surface duplication patterns that would otherwise remain hidden.
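As one possible illustration of surfacing duplication, the sketch below compares paragraphs pairwise with Python's standard `difflib` module and reports pairs that are nearly identical. The function name, the topic IDs, and the 0.85 threshold are assumptions made for the example, and pairwise comparison like this would be too slow for a very large content set without further work.

```python
from difflib import SequenceMatcher
from itertools import combinations


def near_duplicates(paragraphs, threshold=0.85):
    """Return (id_a, id_b, similarity) for paragraph pairs at or above threshold.

    `paragraphs` maps a topic or step ID to its text. The threshold is an
    assumed starting point, not a recommended value.
    """
    pairs = []
    for (id_a, text_a), (id_b, text_b) in combinations(paragraphs.items(), 2):
        ratio = SequenceMatcher(None, text_a.lower(), text_b.lower()).ratio()
        if ratio >= threshold:
            pairs.append((id_a, id_b, round(ratio, 2)))
    return pairs


# Illustrative content only.
paragraphs = {
    "install/step-3": "Select Save to apply your changes.",
    "admin/step-7": "Select Save to apply the changes.",
    "faq/q1": "Contact support if the installer fails.",
}
candidates = near_duplicates(paragraphs)
```

Pairs that score high are candidates for a single reusable snippet. Whether they should be merged is still an editorial decision.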

That’s not flashy. But it’s transformative.

An Example: Promptitude as a Harness

A clear example of a harness in the documentation space is Promptitude.

Promptitude isn’t a model. It sits on top of models. It packages prompts, workflows, and reusable automation into a repeatable system that teams can operationalize and share.

Consider a common scenario: a product team releases an updated graphical user interface. Buttons move. Labels change. A new feature appears in a redesigned panel. Traditionally, a tech writer manually compares screenshots, identifies changes, and rewrites the affected documentation sections.

With a harness like Promptitude, a writer can upload screenshots of the updated interface and generate draft doc updates based on the interface changes. The value is not simply that the AI can compose sentences. The value is that the system can ingest source artifacts, apply a structured workflow, and produce output aligned with your documentation standards.

That is the distinction between “AI that writes” and “AI that improves productivity.”

And it illustrates precisely why harnesses may matter more than the underlying model. Even the most advanced model is limited if it lacks a structured way to operate on real inputs and produce repeatable outputs.

The Skill That Matters Now: Managing AI, Not Just Prompting It

One subtle theme in Mollick’s article is that prompting alone is no longer the defining skill.

In the agentic era, professionals must think in terms of delegation and orchestration. You define the task, specify the constraints, provide the source material, and verify the output. You treat the AI less like a search box and more like a junior assistant who is extremely fast and occasionally overconfident.
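One way to picture that delegation habit is as a small, reusable brief. The Python sketch below is only an illustration: the `TaskBrief` class, its field names, and the sample task are hypothetical and come from neither Mollick's article nor any specific tool. It captures the four parts named above and reports which ones are still empty before you hand the work off.

```python
from dataclasses import dataclass, field


@dataclass
class TaskBrief:
    """A hypothetical delegation brief; field names are illustrative."""
    task: str
    constraints: list = field(default_factory=list)
    sources: list = field(default_factory=list)
    checks: list = field(default_factory=list)

    def missing_parts(self):
        """Name the parts of the brief that are still empty."""
        parts = {
            "task": self.task.strip(),
            "constraints": self.constraints,
            "sources": self.sources,
            "checks": self.checks,
        }
        return [name for name, value in parts.items() if not value]

    def to_prompt(self):
        """Render the brief as plain text that can be pasted into any AI app."""
        def bullets(items):
            return "\n".join(f"- {item}" for item in items)

        return (
            f"Task:\n{self.task}\n\n"
            f"Constraints:\n{bullets(self.constraints)}\n\n"
            f"Source material:\n{bullets(self.sources)}\n\n"
            f"Before finishing, verify:\n{bullets(self.checks)}"
        )


# Illustrative brief; the task and file name are made up.
brief = TaskBrief(
    task="Update the export procedure for the new release",
    sources=["release-notes.md"],
)
gaps = brief.missing_parts()
```

Here `gaps` lists `constraints` and `checks`, which is the point: the brief makes it obvious that the assistant has been given a task and a source but no rules to follow and no definition of done.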

For technical writers, this shift is almost natural. The discipline already revolves around workflow design, content governance, structured standards, and quality control. Managing AI outputs fits neatly into that existing skill set.

The question isn’t whether writers can adapt. It’s whether organizations will recognize that documentation professionals are uniquely positioned to manage AI responsibly and effectively.

So Which AI Should You Use?

After all of this, the original question still hangs in the air.

Which AI should a technical writer use? The answer is less about brand names and more about layers.

The model should be capable and reliable. The app should support your working style. And the harness, if you want real productivity, should enable structured workflows, multi-source input, and repeatable automation.

Instead of asking which AI writes the best paragraph, ask which AI reduces the number of steps between raw information and publishable documentation. Because that is the real job.

AI is no longer a single product. It’s a stack. And technical writers are uniquely qualified to evaluate stacks — because we’ve been managing complexity all along. 🤠